Check your AI usage dashboards. Really look at them.

BCG's latest research shows frontline AI adoption has stalled at 51%. The initial surge of enthusiasm has flattened out. The other half of your employees tried it. Then stopped.

The question isn't whether AI works. The productivity data proves it does. The question is why half your workforce opted out.

Look closer at the half who stopped. These aren't the people who couldn't figure it out. They're not technophobes. They're competent professionals who tried AI, saw what it could do, and still walked away.

What did they see that made them quit? The answer isn't what most executives assume. It's not about the technology. It's not even about the training — at least not in the way we usually think about training.

The people who succeed don't have better prompting skills. They don't know more about how transformers work. They're not more tech-savvy. They've figured out something else entirely.

They've figured out that AI isn't a tool skill. It's a management skill.

Once you see it, you can't unsee it. And it changes everything about how we need to be training people.

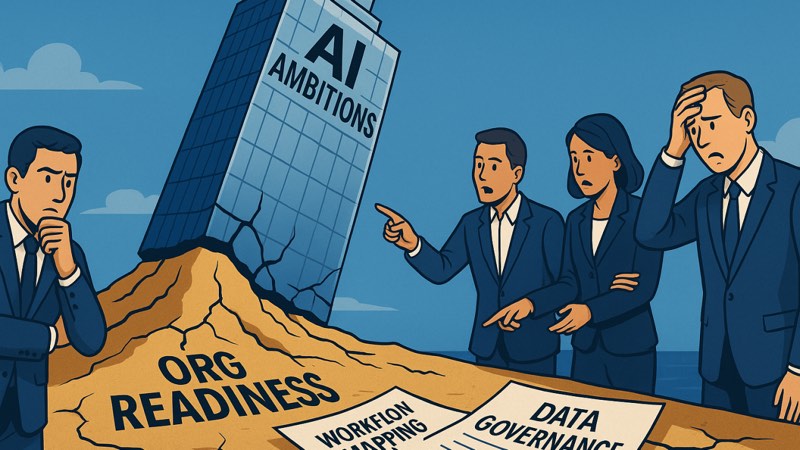

The Gap Nobody's Talking About

AI training at the 101 level exploded. Tool tours, prompting fundamentals, generic use cases. Every company can buy that now. The 401 level got covered too: technical implementation, API integrations, RAG architectures, fine-tuning. Your engineering teams are all over it.

But the market skipped two entire levels in between. And the middle is where most of the productivity gains for most people live.

The 201 level is where the question shifts from "how do I use this tool" to "where does this tool fit in my work" and "when do I trust what it tells me." These are judgment skills: context assembly, quality judgment, task decomposition, iterative refinement, workflow integration, frontier recognition.

The 301 level is where organizations build coordination capability: How do teams share knowledge about what works? How do AI outputs from one team become inputs to another? How do we create governance that enables rather than blocks? This is organizational orchestration.

Most companies have brilliant individuals at 201 trying to work with IT teams at 401, with nothing connecting them. The result is predictable: knowledge that never spreads, employees paralyzed by permission anxiety, shadow AI proliferating outside governance.

BCG found that only 36% of employees believe their AI training is adequate. Even more alarming: 18% of regular AI users received no training at all. They're figuring it out on their own. Or they're not figuring it out at all and just accepting whatever AI gives them. Neither is good.

When AI Makes Smart People Worse

Harvard Business School recruited 758 BCG consultants — about 7% of the firm's individual contributor workforce — and ran a pre-registered experiment with real consulting tasks. These aren't junior people. These are professionals companies pay $500+ per hour to solve their hardest problems.

For tasks inside AI's capability frontier, the results were stunning:

- 12.2% more tasks completed

- 25.1% faster completion

- 40%+ higher quality (human-rated)

But for tasks outside that frontier — tasks that looked like AI should handle them but couldn't — something disturbing happened. Consultants using AI were 19 percentage points less likely to get correct answers than those working without AI.

The AI didn't just fail to help. It actively degraded performance.

Karim Lakhani, who co-led the research, was direct about what went wrong: "People use it the wrong way. People use it as an information search tool like Google. This is not Google."

Here's the thing that should terrify you: the people in that study had no way to know which tasks were which. The frontier is invisible. Your employees can't see where AI succeeds and where it fails until they've tried and failed enough times. Most of them gave up before they learned the map.

The Skill That Matters Isn't Technical

Ethan Mollick, one of the study's authors, makes this point clearly: the best users of AI are good managers. Good teachers. The skills that make you effective with AI aren't prompting skills. They're people skills.

Look at the 201 skill set: task decomposition, quality judgment, iterative refinement, calibrated trust. These are management skills. We just don't teach them as management skills. We teach them, if we teach them at all, as tool skills.

The study also found that AI works as a skill leveler. Bottom-half performers improved their output quality by 43% when using AI. Top-half performers improved by only 17%.

On the surface, that sounds like pure upside. Everyone gets better, the performance gap shrinks, your weakest links strengthen. But think about the implications. When bottom-half performers gain 43% and top-half performers gain only 17%, the distance between them compresses. So what is your competitive advantage? And your bottom-half performers, the ones who benefit most from AI, are also the ones least equipped to recognize when AI is failing.
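To put a rough number on that compression, here is a small sketch. The 43% and 17% gains come from the study. The baseline quality scores are made up for illustration, and the result does not depend on them, since the top-to-bottom ratio always shrinks by the same factor of 1.17 / 1.43.

```python
# Gains quoted from the study; the baseline scores below are hypothetical.
BOTTOM_GAIN = 0.43
TOP_GAIN = 0.17


def gap_ratio(bottom_score: float, top_score: float) -> tuple:
    """Return the top-to-bottom quality ratio before and after AI."""
    before = top_score / bottom_score
    after = (top_score * (1 + TOP_GAIN)) / (bottom_score * (1 + BOTTOM_GAIN))
    return before, after


# Assumed baselines: bottom-half performers score 4.0, top-half score 6.0.
before, after = gap_ratio(bottom_score=4.0, top_score=6.0)
print(f"top-to-bottom ratio before AI: {before:.2f}")
print(f"top-to-bottom ratio after AI:  {after:.2f}")
print(f"relative shrink in the ratio:  {1 - after / before:.1%}")
```

Whatever baselines you plug in, the advantage your top performers held over the bottom half shrinks by roughly a fifth.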

The Six Skills Nobody's Teaching

There are six 201-level skills that separate AI success from failure. Zero involve prompting techniques.

1. Context Assembly

Knowing what information to provide, from which sources, and why. The 101 user dumps entire documents into AI or provides almost no context. Both produce mediocre results. The 201 user understands that AI is sensitive to context quality — they take time to provide the right background, the right constraints, the right examples.

2. Quality Judgment

Knowing when to trust AI output and when to verify. This operates on two dimensions: knowing which task types require what level of verification, and knowing within a given output which parts are likely reliable versus problematic. AI can confidently state accurate information and hallucinate in the exact same paragraph. The 201 skill is learning to detect that.

3. Task Decomposition

Breaking work into AI-appropriate chunks rather than throwing entire tasks at the tool or avoiding it completely. This is where the management framing helps most directly. You're identifying which subtasks to delegate to AI versus keep yourself — just like you would with a team member.

4. Iterative Refinement

Moving from 70% to 95% through structured passes. The 101 user accepts the first output (and gets AI slop), or tries once, sees mediocre results, and abandons the effort entirely. The 201 user treats the first draft as a starting point. You wouldn't accept an intern's first draft. Same principle applies here.

5. Workflow Integration

Embedding AI into how work gets done rather than treating it as a side tool. The difference shows up in whether AI is a separate activity ("I'll try the AI thing later") or an integrated capability ("This is just how we do RFPs now"). Integration isn't about using AI for everything. It's about AI becoming invisible — a natural part of the process.

6. Frontier Recognition

Knowing when you're operating outside AI's capability boundary. This is the skill that prevents that devastating 19-percentage-point performance drop. It requires building explicit knowledge of where AI excels versus fails for your particular work — and sharing failure cases so the team learns the boundaries together.
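One way a team could make the frontier visible is a shared log of verified outcomes per task type. The sketch below is purely illustrative: `FrontierLog`, its method names, the 30% threshold, and the example task types are all assumptions, not something taken from the research.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class FrontierLog:
    """Hypothetical shared log of whether AI output held up, per task type."""

    # task type -> [times the output was correct, times it was wrong]
    outcomes: dict = field(default_factory=lambda: defaultdict(lambda: [0, 0]))

    def record(self, task_type: str, ai_was_correct: bool) -> None:
        """Add one human-verified outcome for a task type."""
        self.outcomes[task_type][0 if ai_was_correct else 1] += 1

    def failure_rate(self, task_type: str) -> Optional[float]:
        """Share of logged attempts where the AI output was wrong."""
        if task_type not in self.outcomes:
            return None
        correct, wrong = self.outcomes[task_type]
        return wrong / (correct + wrong)

    def outside_frontier(self, threshold: float = 0.3, min_samples: int = 3) -> list:
        """Task types with enough evidence and a failure rate at or above the threshold."""
        flagged = []
        for task_type, (correct, wrong) in self.outcomes.items():
            total = correct + wrong
            if total >= min_samples and wrong / total >= threshold:
                flagged.append(task_type)
        return sorted(flagged)


log = FrontierLog()
for ok in (True, True, True, False):
    log.record("summarize meeting notes", ok)
for ok in (False, False, True):
    log.record("reconcile interview data with spreadsheet", ok)

print(log.outside_frontier())
```

The point is not the tooling. A spreadsheet would do. What matters is that failures get written down by task type, so the next person does not have to rediscover the boundary alone.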

The Question Your Employees Are Asking

Here's what's stopping people from using AI. It's not that they can't figure it out. It's that they don't know if they're allowed to.

Your most conscientious employees see AI as risk and avoid it entirely. They're asking: Can I use AI for this client work? Does this violate our data policies? Am I liable if AI hallucinates facts? Will using AI make me look replaceable?

This isn't psychological resistance. This is rational risk assessment in the absence of clear guardrails.

The Protiviti/LSE study quantified this precisely: 93% of employees who receive AI training use AI in their roles. Among those without training? Just 57%. That's a 36-percentage-point gap driven almost entirely by organizational enablement. Even more striking: trained employees save 11 hours per week with AI. Untrained employees save only 5 hours. Training more than doubles the productivity impact.
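The arithmetic behind those figures is simple enough to check. In this quick sketch the numbers are the ones quoted above, and the variable names are just labels chosen here.

```python
# Figures as quoted from the Protiviti/LSE study.
trained_usage_pct, untrained_usage_pct = 93, 57
trained_hours_saved, untrained_hours_saved = 11, 5

# Difference between two percentages is measured in percentage points.
usage_gap_points = trained_usage_pct - untrained_usage_pct

# Ratio of weekly hours saved, trained versus untrained.
hours_ratio = trained_hours_saved / untrained_hours_saved

print(f"usage gap: {usage_gap_points} percentage points")
print(f"hours saved, trained vs. untrained: {hours_ratio:.1f}x")
```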

The gap isn't just a skill gap. It's a permission gap. And your most conscientious employees — the ones who care most about doing good work — are the ones most likely to opt out.

What Works

The organizations breaking through this barrier share common approaches:

- Create AI labs with power users, not just technologists. These need to be lightweight, fast-moving teams that experiment with workflows. They must include employees with no technical background.

- Conduct systematic discovery across functions. Companies that systematically interview departments about how AI might improve their work surface dozens of concrete use cases. Your organization has similar hidden knowledge, but someone has to surface it.

- Make success visible. Run low-stakes competitions. "What's a workflow you've meaningfully improved using AI?" becomes a question you ask on Friday. Surface practical applications. Create social proof.

- Invest in hours, not just access. BCG found that employees who receive more than five hours of formal AI training are significantly more likely to become regular users.

- Define guardrails that say yes, not just no. What data is allowed? How should you disclose AI assistance? What does good look like? So few organizations bother to define positive AI usage.

- Share failure cases systematically. When someone discovers a task that AI handles poorly, that knowledge needs to spread.

The Talent Crisis Hiding Inside Your Productivity Wins

Traditionally, junior employees learned judgment through repetition. They'd draft the memo 10 times before it was good. Analyze the data 50 times before they could spot patterns. The reps built the judgment.

AI compresses that learning curve. But it also eliminates the reps.

The junior analyst who would have spent two years learning to spot data anomalies through manual review now gets AI-generated insights immediately. They're more productive today but haven't developed the pattern recognition that makes senior analysts valuable.

This is the apprentice problem: AI lets people skip the struggle that builds mastery. We have no idea how to develop talent when the traditional learning path has been automated away. When AI handles routine tasks, where do future leaders get the experience that built their predecessors' expertise?

The Gap That Matters

Your employees are already using AI. The value is real. Workers see it. But organizations aren't structured to capture it.

Without intentional work on the 201 level, you get a massive coordination failure. A few people are at 401 and moving fast. Most couldn't care less. The gap between them widens.

Ask yourself honestly:

- Can your people identify which subtasks AI should do versus what they should do?

- Do you have a way to iterate on AI output, not just accept first drafts?

- Has AI been integrated into workflows or is it just a side activity?

- Do you know, for your specific work, where AI fails?

If you can't answer those questions, your people are probably stuck at 101. They're in the trough. And most of them aren't going to figure it out on their own.

The difference between AI activity and AI fluency isn't about the tools you deploy. It's about whether you've invested in the judgment layer that makes those tools reliable.