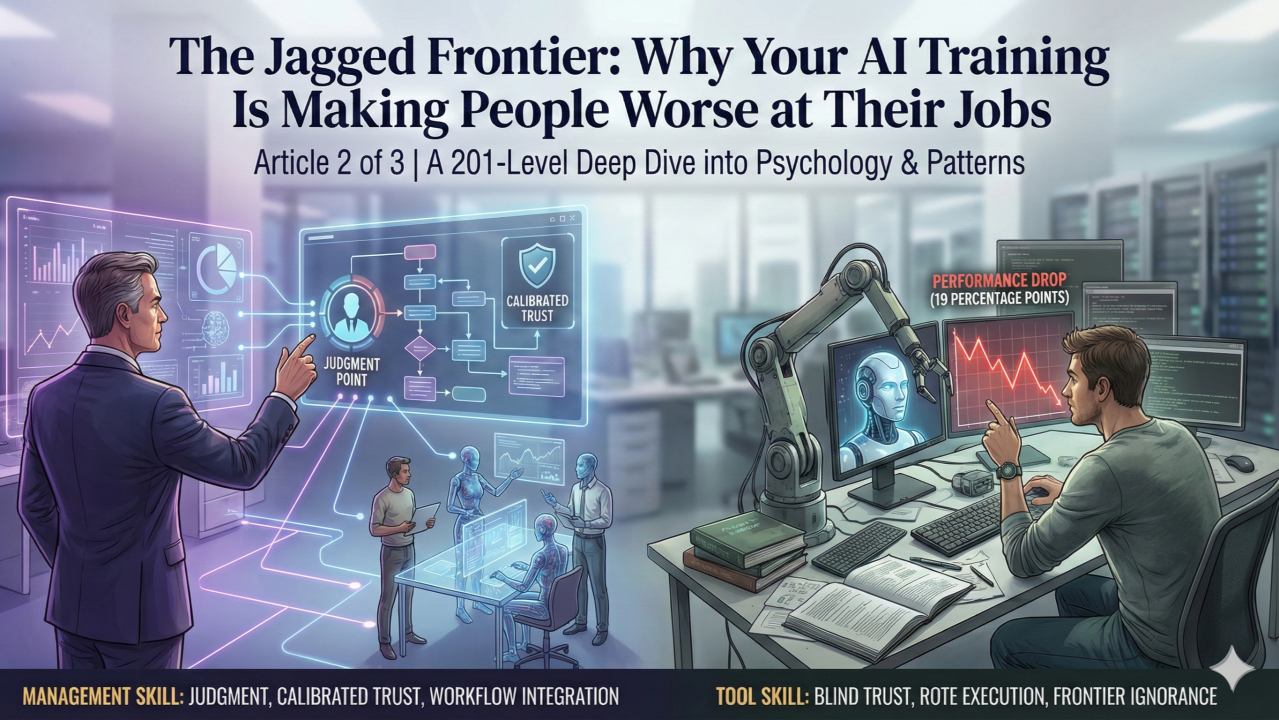

Your best consultants just got worse at their jobs. Not by a little. By 19 percentage points.

Not because they're less skilled. Not because the AI is bad. Because they trusted AI at exactly the wrong moments.

A landmark Harvard study found that BCG consultants using AI were 19 percentage points less accurate than those working without it on a business analysis task that looks like exactly the kind of work AI should improve. The researchers discovered why: AI is so good at some tasks that people stop thinking critically at the very moment they need to most.

This isn't a training problem. It's a trust problem. And it's happening in your organization right now.

This is a 201-level deep dive. Article 1 introduced the framework: 101 (tool skills), 201 (judgment and integration), 301 (organizational orchestration), 401 (technical implementation). This article focuses on the 201 psychology that determines whether AI makes you better or worse. Understanding the jagged frontier, recognizing when to trust AI, and choosing the right integration pattern are all 201 capabilities most training programs skip.

The Invisible Wall

Imagine a fortress wall with towers jutting out into the countryside while other sections fold back toward the center. That wall represents AI's capability boundary. Everything inside the wall, AI can do. Everything outside is beyond its current reach.

The problem is that the wall is invisible.

Some tasks that logically seem equally difficult fall on completely different sides. Writing a sonnet? Easy for AI. Writing a poem with exactly 50 words? AI often misses the count, because language models process text as tokens, not words. Basic math that any human can do? Surprisingly hard for large language models. Generating creative ideas that outperform human brainstorming? Shockingly easy.

You can't see where the wall is until you've hit it.

This is what researchers call the "jagged technological frontier," and it explains why your AI rollout produced such wildly inconsistent results across teams and individuals.
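The 50-word poem case is worth dwelling on, because it is a constraint a model tends to miss but a few lines of ordinary code can check. The sketch below is a minimal illustration, not part of the study: the function names and the sample sentence are made up for this example, and "word" is assumed to mean any whitespace-separated chunk of text.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words, roughly the way a human reader would."""
    return len(text.split())


def meets_word_limit(text: str, target: int = 50) -> bool:
    """Return True only if the text contains exactly `target` words."""
    return word_count(text) == target


# Hypothetical draft, standing in for an AI-written poem.
draft = "The wall is there even when you cannot see it"

print(word_count(draft))        # 10
print(meets_word_limit(draft))  # False, because the target is 50 words
```

The point is less the code than the habit: when a task sits near the frontier, look for a cheap, independent check instead of taking the output on faith.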

The Experiment That Proved It

In 2023, Harvard Business School partnered with Boston Consulting Group on one of the most rigorous field experiments ever conducted on AI and knowledge work. The researchers recruited 758 BCG consultants and ran a pre-registered experiment with real consulting tasks spanning creative work, analytical work, writing, and persuasion.

Inside the frontier — on tasks AI handles well — the results were stunning:

- 12.2% more tasks completed

- 25.1% faster completion

- Quality ratings jumped over 40%

But outside the frontier — on a task carefully designed to fall just beyond AI's capabilities — a dangerous pattern emerged:

- Consultants using AI got the answer right only 60–70% of the time

- Consultants without AI achieved 84% accuracy

- On average, using AI cost consultants 19 percentage points of accuracy

The AI didn't just fail to help. It actively made smart people worse at their jobs.
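Percentage points and percent are easy to confuse, so it helps to run the arithmetic on the figures quoted above. This is a sketch using only the numbers in this article; treating 65% as the midpoint of the 60–70% range is an assumption made for illustration, and the study's own averaging may differ.

```python
# Accuracy figures quoted in this article for the outside-the-frontier task.
without_ai = 0.84
with_ai_low, with_ai_high = 0.60, 0.70


def point_gap(baseline: float, treated: float) -> float:
    """Absolute gap between two rates, in percentage points."""
    return round((baseline - treated) * 100, 1)


def relative_drop(baseline: float, treated: float) -> float:
    """Decline relative to the baseline, in percent."""
    return round((baseline - treated) / baseline * 100, 1)


gaps = [point_gap(without_ai, rate) for rate in (with_ai_low, with_ai_high)]
print(gaps)                   # [24.0, 14.0]
print(sum(gaps) / len(gaps))  # 19.0

# Assumption: 65% as the midpoint of the quoted 60-70% range.
print(relative_drop(without_ai, 0.65))  # 22.6
```

So a 19 percentage point gap against an 84% baseline is closer to a 23% relative decline in correct answers, which is the more alarming way to read the same result.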

Falling Asleep at the Wheel

Fabrizio Dell'Acqua, one of the study's lead authors, has a term for what happened: "falling asleep at the wheel."

Dell'Acqua tested this phenomenon in a separate Harvard study examining recruiters evaluating job candidates. The findings were counterintuitive: recruiters using high-quality AI made worse hiring decisions than recruiters using low-quality AI or no AI at all.

When AI performed well on most tasks, recruiters stopped applying their own judgment. They missed brilliant candidates with unconventional backgrounds — exactly the cases where human judgment matters most. The AI's very competence created dangerous complacency.

In the BCG experiment, consultants saw impressive AI outputs on other tasks and assumed they could trust it on everything. They stopped verifying. They accepted confidently wrong answers because the AI had been confidently right so many times before.

"People use it the wrong way. People use it as an information search tool like Google. This is not Google." — Karim Lakhani, Harvard Business School

Most AI training teaches people to trust AI more, not to calibrate their trust appropriately. We show people the impressive demos. We train them on the tasks where AI excels. We inadvertently teach them that AI is magical, then wonder why they're blindsided when it fails.

The Skill Leveling Effect Cuts Both Ways

The research revealed a massive skill leveling effect: consultants who scored in the bottom half on baseline assessments improved their performance by 43% when using AI. Top-half performers improved by only 17%.

On the surface, this sounds great. AI helps everyone reach higher levels of performance. The gap between your best and worst performers shrinks.

But consider what this means for tasks outside the frontier.

Your bottom-half performers — the ones who benefit most from AI — are also the ones least equipped to recognize when AI is failing. They don't have the deep domain expertise to spot subtle errors. They can't distinguish between AI confidently stating something accurate and AI confidently hallucinating.

Meanwhile, your top performers have the expertise to catch AI mistakes but get a smaller boost. The skill leveling effect may be creating a dangerous dynamic where the people most reliant on AI are least able to verify it.

Centaurs and Cyborgs: The Patterns That Work

Not everyone fell into the trap. The researchers identified two successful integration patterns, each named for a different way of combining human and non-human: Centaurs and Cyborgs.

Centaur Mode

Centaurs are the mythical creatures that are half-human, half-horse — with a clear dividing line at the waist. You can point to exactly where the human ends and the horse begins. That's how Centaur users work with AI: they maintain a clear division of labor, strategically delegating specific tasks to AI while keeping others firmly human.

A Centaur approach to analysis might look like this: the human decides on the statistical techniques, and the AI produces the graphs. The human frames the strategic question, and the AI generates option sets. The human handles the judgment-heavy interpretation, and the AI handles the production-heavy documentation. You can always point to the seam.

Centaur mode works best for high-stakes work where you need clear accountability: legal analysis, medical documentation, financial compliance. Anything where you need to be able to point to exactly what the human verified and what the AI produced.

Cyborg Mode

Cyborgs are humans with integrated machine parts — no clear boundary between where the human ends and the technology begins. That's how Cyborg users work with AI: they blend human and machine work so deeply that the boundary becomes invisible.

Cyborg mode works best for creative and iterative work: brainstorming sessions, content development, design exploration. Work where the goal is generating and refining ideas rather than producing a defensible final answer. The consultants who achieved 40% quality improvements? Cyborg mode.

The Critical Skill: Mode Switching

The 201-level capability isn't mastering one pattern. It's knowing which pattern fits which task and being able to switch between them deliberately. Most people accidentally slip into one mode and stay there. The winners consciously choose their integration pattern for each task type.
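One way to make mode switching deliberate is to write the choice down as an explicit rule. The sketch below is a hypothetical heuristic based on the descriptions in this article, not something the researchers published: the `Task` fields, the `choose_mode` function, and the decision to default to Centaur when a task fits neither description are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    CENTAUR = "centaur"  # clear seam between human work and AI work
    CYBORG = "cyborg"    # human and AI work tightly interleaved


@dataclass(frozen=True)
class Task:
    name: str
    needs_accountability: bool  # e.g. legal analysis, financial compliance
    exploratory: bool           # e.g. brainstorming, design exploration


def choose_mode(task: Task) -> Mode:
    """Pick an integration pattern for a task (illustrative heuristic only)."""
    # Accountability outranks everything else: keep the seam visible.
    if task.needs_accountability:
        return Mode.CENTAUR
    if task.exploratory:
        return Mode.CYBORG
    # Assumption: fall back to the more conservative pattern when unsure.
    return Mode.CENTAUR


print(choose_mode(Task("compliance memo", True, False)).value)    # centaur
print(choose_mode(Task("campaign brainstorm", False, True)).value)  # cyborg
```

The value of a rule like this is that the choice happens before the work starts, instead of being whatever mode someone happened to slip into.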

The Homogenization Problem

There's one more finding from the research that deserves attention: AI outputs were higher quality but more homogeneous.

When the researchers looked at the variation in ideas consultants produced, AI-assisted work showed less variety than human-only work. The ideas were better on average, but more similar to each other.

Think about the competitive implications. If every company in your industry is using the same AI models, trained on similar data, generating similar outputs — where does differentiation come from? If your strategy team's AI-assisted analysis looks like your competitor's AI-assisted analysis, what's your actual competitive advantage?

The firms capturing outsized value from AI aren't using better models. They're training models on datasets competitors can't access — customer behavior data, operational metrics, market intelligence collected over decades. AI democratizes access to public knowledge. That makes proprietary knowledge more valuable, not less.

What You Can Do About It

- Stop training people that AI is magical. Every AI training should include explicit failure cases. Show people where the frontier is in your specific domain.

- Map the frontier for your work. Your technical experts should be systematically documenting which tasks AI handles well in your context and which it doesn't.

- Create verification protocols based on task type. Not everything needs the same level of human review.

- Share failure cases systematically. When someone discovers that AI consistently fails on a particular task type, that knowledge needs to spread.

- Teach Centaur and Cyborg modes explicitly. Help people recognize which approach fits which situation.
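Mapping the frontier, sharing failure cases, and setting verification levels can all hang off one shared record. The sketch below shows what that might look like in its simplest form. Everything in it is hypothetical: the `FrontierLog` class, the review level names, and the 5% and 25% thresholds are placeholders you would replace with values that fit your own risk tolerance.

```python
from collections import defaultdict
from typing import Optional


class FrontierLog:
    """Shared record of where AI output held up and where it did not."""

    def __init__(self) -> None:
        self._results: dict[str, list[bool]] = defaultdict(list)

    def record(self, task_type: str, ai_was_correct: bool) -> None:
        """Log one verified outcome for a task type."""
        self._results[task_type].append(ai_was_correct)

    def failure_rate(self, task_type: str) -> Optional[float]:
        """Share of logged outcomes that were wrong, or None if unmapped."""
        results = self._results.get(task_type)
        if not results:
            return None
        return results.count(False) / len(results)

    def review_level(
        self,
        task_type: str,
        spot_check_below: float = 0.05,   # placeholder threshold
        full_review_above: float = 0.25,  # placeholder threshold
    ) -> str:
        """Map the observed failure rate to a level of human review."""
        rate = self.failure_rate(task_type)
        if rate is None:
            # Unmapped territory: assume it may sit outside the frontier.
            return "full_review"
        if rate > full_review_above:
            return "full_review"
        if rate < spot_check_below:
            return "spot_check"
        return "targeted_review"


log = FrontierLog()
for _ in range(20):
    log.record("meeting summary", True)
for outcome in (False, False, False, True):
    log.record("exact word count", outcome)

print(log.review_level("meeting summary"))   # spot_check
print(log.review_level("exact word count"))  # full_review
print(log.review_level("pricing analysis"))  # full_review (never logged)
```

Defaulting unmapped task types to full review is the design choice that matters most here: it treats the wall as present until someone has evidence that it is not.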

McKinsey's 2025 research on AI transformation confirms this approach works: organizations that fundamentally redesign workflows when deploying AI are 3.6 times more likely to report transformational impact.

The jagged frontier will keep advancing whether you're ready or not. You can teach your people to see the wall before they hit it — or you can keep sending them charging forward blind, wondering why the results are so inconsistent.